Field mapping using Transformer stage:

Requirement:

field will be right justified zero filled, Take last 18 characters

Solution:

Right("0000000000":Trim(Lnk_Xfm_Trans.link),18)

Scenario 1:

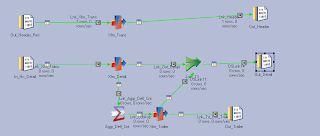

We have two datasets with 4 cols each with different names. We should create a dataset with 4 cols 3 from one dataset and one col with the record count of one dataset.

We can use aggregator with a dummy column and get the count from one dataset and do a look up from other dataset and map it to the 3 rd dataset

Something similar to the below design:

Scenario 2:

Following is the existing job design. But requirement got changed to: Head and trailer datasets should populate even if detail records is not present in the source file. Below job don't do that job.

Hence changed the above job to this following requirement:

Used row generator with a copy stage. Given default value( zero) for col( count) coming in from row generator. If no detail records it will pick the record count from row generator.

We have a source which is a sequential file with header and footer. How to remove the header and footer while reading this file using sequential file stage of Datastage?

Sol:Type command in putty: sed '1d;$d' file_name>new_file_name (type this in job before job subroutine then use new file in seq stage)

IF I HAVE SOURCE LIKE COL1 A A B AND TARGET LIKE COL1 COL2 A 1 A 2 B1. HOW TO ACHIEVE THIS OUTPUT USING STAGE VARIABLE IN TRANSFORMER STAGE?

If keyChange =1 Then 1 Else stagevaraible+1

Suppose that 4 job control by the sequencer like (job 1, job 2, job 3, job 4 )if job 1 have 10,000 row ,after run the job only 5000 data has been loaded in target table remaining are not loaded and your job going to be aborted then.. How can short out the problem.Suppose job sequencer synchronies or control 4 job but job 1 have problem, in this condition should go director and check it what type of problem showing either data type problem, warning massage, job fail or job aborted, If job fail means data type problem or missing column action .So u should go Run window ->Click-> Tracing->Performance or In your target table ->general -> action-> select this option here two option

(i) On Fail -- commit , Continue

(ii) On Skip -- Commit, Continue.

First u check how many data already load after then select on skip option then continue and what remaining position data not loaded then select On Fail , Continue ...... Again Run the job defiantly u get successful massage

Question: I want to process 3 files in sequentially one by one how can i do that. while processing the files it should fetch files automatically .

Ans:If the metadata for all the files r same then create a job having file name as parameter then use same job in routine and call the job with different file name...or u can create sequencer to use the job..

Parameterize the file name.

Build the job using that parameter

Build job sequencer which will call this job and will accept the parameter for file name.

Write a UNIX shell script which will call the job sequencer three times by passing different file each time.

RE: What Happens if RCP is disable ?

In such case Osh has to perform Import and export every time when the job runs and the processing time job is also increased...

Runtime column propagation (RCP): If RCP is enabled for any job and specifically for those stages whose output connects to the shared container input then meta data will be propagated at run time so there is no need to map it at design time.

If RCP is disabled for the job in such case OSH has to perform Import and export every time when the job runs and the processing time job is also increased.

Then you have to manually enter all the column description in each stage.RCP- Runtime column propagation

Question:

Source: Target

Eno Ename Eno Ename

1 a,b 1 a

2 c,d 2 b

3 e,f 3 c

source has 2 fields like

COMPANY LOCATION

IBM HYD

TCS BAN

IBM CHE

HCL HYD

TCS CHE

IBM BAN

HCL BAN

HCL CHE

LIKE THIS.......

THEN THE OUTPUT LOOKS LIKE THIS....

Company loc count

TCS HYD 3

BAN

CHE

IBM HYD 3

BAN

CHE

HCL HYD 3

BAN

CHE

2)input is like this:

no,char

1,a

2,b

3,a

4,b

5,a

6,a

7,b

8,a

But the output is in this form with row numbering of Duplicate occurence

output:

no,char,Count

"1","a","1"

"6","a","2"

"5","a","3"

"8","a","4"

"3","a","5"

"2","b","1"

"7","b","2"

"4","b","3"

3)Input is like this:

file1

10

20

10

10

20

30

Output is like:

file2 file3(duplicates)

10 10

20 10

30 20

4)Input is like:

file1

10

20

10

10

20

30

Output is like Multiple occurrences in one file and single occurrences in one file:

file2 file3

10 30

10

10

20

20

5)Input is like this:

file1

10

20

10

10

20

30

Output is like:

file2 file3

10 30

20

6)Input is like this:

file1

1

2

3

4

5

6

7

8

9

10

Output is like:

file2(odd) file3(even)

1 2

3 4

5 6

7 8

9 10

7)How to calculate Sum(sal), Avg(sal), Min(sal), Max(sal) with out using Aggregator stage..

8)How to find out First sal, Last sal in each dept with out using aggregator stage

9)How many ways are there to perform remove duplicates function with out using Remove duplicate stage..

Scenario:

no,char

1,a

2,b

3,a

4,b

5,a

6,a

7,b

8,a

But the output is in this form with row numbering of Duplicate occurence

output:

no,char,Count

"1","a","1"

"6","a","2"

"5","a","3"

"8","a","4"

"3","a","5"

"2","b","1"

"7","b","2"

"4","b","3"

3)Input is like this:

file1

10

20

10

10

20

30

Output is like:

file2 file3(duplicates)

10 10

20 10

30 20

4)Input is like:

file1

10

20

10

10

20

30

Output is like Multiple occurrences in one file and single occurrences in one file:

file2 file3

10 30

10

10

20

20

5)Input is like this:

file1

10

20

10

10

20

30

Output is like:

file2 file3

10 30

20

6)Input is like this:

file1

1

2

3

4

5

6

7

8

9

10

Output is like:

file2(odd) file3(even)

1 2

3 4

5 6

7 8

9 10

7)How to calculate Sum(sal), Avg(sal), Min(sal), Max(sal) with out using Aggregator stage..

8)How to find out First sal, Last sal in each dept with out using aggregator stage

9)How many ways are there to perform remove duplicates function with out using Remove duplicate stage..

Scenario:

source has 2 fields like

COMPANY LOCATION

IBM HYD

TCS BAN

IBM CHE

HCL HYD

TCS CHE

IBM BAN

HCL BAN

HCL CHE

LIKE THIS.......

THEN THE OUTPUT LOOKS LIKE THIS....

Company loc count

TCS HYD 3

BAN

CHE

IBM HYD 3

BAN

CHE

HCL HYD 3

BAN

CHE

Solution:

Seqfile......>Sort......>Trans......>RemoveDuplicates..........Dataset

Sort Trans:

Key=Company create stage variable as Company1

Sort order=Asc Company1=If(in.keychange=1) then in.Location Else Company1:',':in.Location

create keychange=True Drag and Drop in derivation

Company ....................Company

Company1........................Location

RemoveDup:

Key=Company

Duplicates To Retain=Last

11)The input is

Shirt|red|blue|green

Pant|pink|red|blue

Output should be,

Shirt:red

Shirt:blue

Shirt:green

pant:pink

pant:red

pant:blue

Solution:

it is reverse to pivote stage

use

seq------sort------tr----rd-----tr----tg

in the sort stage use create key change column is true

in trans create stage variable=if colu=1 then key c.value else key v::colum

rd stage use duplicates retain last

tran stage use field function superate columns

use

seq------sort------tr----rd-----tr----tg

in the sort stage use create key change column is true

in trans create stage variable=if colu=1 then key c.value else key v::colum

rd stage use duplicates retain last

tran stage use field function superate columns

similar Scenario: :

source

col1 col3

1 samsung

1 nokia

1 ercisson

2 iphone

2 motrolla

3 lava

3 blackberry

3 reliance

Expected Output

col 1 col2 col3 col4

1 samsung nokia ercission

2 iphone motrolla

3 lava blackberry reliance

col1 col3

1 samsung

1 nokia

1 ercisson

2 iphone

2 motrolla

3 lava

3 blackberry

3 reliance

Expected Output

col 1 col2 col3 col4

1 samsung nokia ercission

2 iphone motrolla

3 lava blackberry reliance

You can get it by using Sort stage --- Transformer stage --- RemoveDuplicates --- Transformer --tgt

Ok

First Read and Load the data into your source file( For Example Sequential File )

And in Sort stage select key change column = True ( To Generate Group ids)

Go to Transformer stage

Create one stage variable.

You can do this by right click in stage variable go to properties and name it as your wish ( For example temp)

and in expression write as below

if keychange column =1 then column name else temp:',':column name

This column name is the one you want in the required column with delimited commas.

On remove duplicates stage key is col1 and set option duplicates retain to--> Last.

in transformer drop col3 and define 3 columns like col2,col3,col4

in col1 derivation give Field(InputColumn,",",1) and

in col1 derivation give Field(InputColumn,",",2) and

in col1 derivation give Field(InputColumn,",",3)

Ok

First Read and Load the data into your source file( For Example Sequential File )

And in Sort stage select key change column = True ( To Generate Group ids)

Go to Transformer stage

Create one stage variable.

You can do this by right click in stage variable go to properties and name it as your wish ( For example temp)

and in expression write as below

if keychange column =1 then column name else temp:',':column name

This column name is the one you want in the required column with delimited commas.

On remove duplicates stage key is col1 and set option duplicates retain to--> Last.

in transformer drop col3 and define 3 columns like col2,col3,col4

in col1 derivation give Field(InputColumn,",",1) and

in col1 derivation give Field(InputColumn,",",2) and

in col1 derivation give Field(InputColumn,",",3)

Scenario:

12)Consider the following employees data as source?

employee_id, salary

-------------------

10, 1000

20, 2000

30, 3000

40, 5000

Create a job to find the sum of salaries of all employees and this sum should repeat for all the rows.

The output should look like as

employee_id, salary, salary_sum

-------------------------------

10, 1000, 11000

20, 2000, 11000

30, 3000, 11000

40, 5000, 11000

employee_id, salary

-------------------

10, 1000

20, 2000

30, 3000

40, 5000

Create a job to find the sum of salaries of all employees and this sum should repeat for all the rows.

The output should look like as

employee_id, salary, salary_sum

-------------------------------

10, 1000, 11000

20, 2000, 11000

30, 3000, 11000

40, 5000, 11000

Scenario:

I have two source tables/files numbered 1 and 2.

In the the target, there are three output tables/files, numbered 3,4 and 5.

The scenario is that,

to the out put 4 -> the records which are common to both 1 and 2 should go.

to the output 3 -> the records which are only in 1 but not in 2 should go

to the output 5 -> the records which are only in 2 but not in 1 should go.

In the the target, there are three output tables/files, numbered 3,4 and 5.

The scenario is that,

to the out put 4 -> the records which are common to both 1 and 2 should go.

to the output 3 -> the records which are only in 1 but not in 2 should go

to the output 5 -> the records which are only in 2 but not in 1 should go.

sltn:src1----->copy1------>----------------------------------->output_1(only left table)

Join(inner type)----> ouput_1

src2----->copy2------>----------------------------------->output_3(only right table)

Join(inner type)----> ouput_1

src2----->copy2------>----------------------------------->output_3(only right table)

Consider the following employees data as source?

employee_id, salary

-------------------

10, 1000

20, 2000

30, 3000

40, 5000

Scenario:

Create a job to find the sum of salaries of all employees and this sum should repeat for all the rows.

The output should look like as

employee_id, salary, salary_sum

-------------------------------

10, 1000, 11000

20, 2000, 11000

30, 3000, 11000

40, 5000, 11000

sltn:

Take Source --->Transformer(Add new Column on both the output links and assign a value as 1 )------------------------> 1) Aggregator (Do group by using that new column)

2)lookup/join( join on that new column)-------->tgt.

employee_id, salary

-------------------

10, 1000

20, 2000

30, 3000

40, 5000

Scenario:

Create a job to find the sum of salaries of all employees and this sum should repeat for all the rows.

The output should look like as

employee_id, salary, salary_sum

-------------------------------

10, 1000, 11000

20, 2000, 11000

30, 3000, 11000

40, 5000, 11000

sltn:

Take Source --->Transformer(Add new Column on both the output links and assign a value as 1 )------------------------> 1) Aggregator (Do group by using that new column)

2)lookup/join( join on that new column)-------->tgt.

Scenario:

sno,sname,mark1,mark2,mark3

1,rajesh,70,68,79

2,mamatha,39,45,78

3,anjali,67,39,78

4,pavani,89,56,45

5,indu,56,67,78

out put is

sno,snmae,mark1,mark2,mark3,delimetercount

1,rajesh,70,68,79,4

2,mamatha,39,45,78,4

3,anjali,67,39,78,4

4,pavani,89,56,45,4

5,indu,56,67,78,4

seq--->trans--->seq

create one stage variable as delimiter..

and put derivation on stage as DSLink4.sno : "," : DSLink4.sname : "," : DSLink4.mark1 : "," :DSLink4.mark2 : "," : DSLink4.mark3

and do mapping and create one more column count as integer type.

and put derivation on count column as Count(delimter, ",")

scenario:

sname total_vowels_count

Allen 2

Scott 1

Ward 1

Under Transformer Stage Description:

total_Vowels_Count=Count(DSLink3.last_name,"a")+Count(DSLink3.last_name,"e")+Count(DSLink3.last_name,"i")+Count(DSLink3.last_name,"o")+Count(DSLink3.last_name,"u").

Scenario:

1)On daily we r getting some huge files data so all files metadata is same we have to load in to target table how we can load?

Use File Pattern in sequential file

2) One column having 10 records at run time we have to send 5th and 6th record to target at run time how we can send?

Can get through,by using UNIX command in sequential file filter option

How can we get 18 months date data in transformer stage?

Use transformer stage after input seq file and try this one as constraint in transformer stage :

DaysSinceFromDate(CurrentDate(), DSLink3.date_18)<=548 OR DaysSinceFromDate(CurrentDate(), DSLink3.date_18)<=546

where date_18 column is the column having that date which needs to be less or equal to 18 months and 548 is no. of days for 18 months and for leap year it is 546(these numbers you need to check).

What is differences between Force Compile and Compile ?

Diff b/w Compile and Validate?

Compile option only checks for all mandatory requirements like link requirements, stage options and all. But it will not check if the database connections are valid.

Validate is equivalent to Running a job except for extraction/loading of data. That is, validate option will test database connectivity by making connections to databases.

im having i/p as

col A

a_b_c

x_F_I

DE_GH_IF

we hav to mak it as

col1 col 2 col3

a b c

x f i

de gh if

*********************

Scenario:

Scenario:

Scenario:

1)On daily we r getting some huge files data so all files metadata is same we have to load in to target table how we can load?

Use File Pattern in sequential file

2) One column having 10 records at run time we have to send 5th and 6th record to target at run time how we can send?

Can get through,by using UNIX command in sequential file filter option

How can we get 18 months date data in transformer stage?

Use transformer stage after input seq file and try this one as constraint in transformer stage :

DaysSinceFromDate(CurrentDate(), DSLink3.date_18)<=548 OR DaysSinceFromDate(CurrentDate(), DSLink3.date_18)<=546

where date_18 column is the column having that date which needs to be less or equal to 18 months and 548 is no. of days for 18 months and for leap year it is 546(these numbers you need to check).

What is differences between Force Compile and Compile ?

Diff b/w Compile and Validate?

Compile option only checks for all mandatory requirements like link requirements, stage options and all. But it will not check if the database connections are valid.

Validate is equivalent to Running a job except for extraction/loading of data. That is, validate option will test database connectivity by making connections to databases.

How to FInd Out Duplicate Values Using Transformer?

You can capture the duplicate records based on keys using Transformer stage variables.

1. Sort and partition the input data of the transformer on the key(s) which defines the duplicate.

2. Define two stage variables, let's say StgVarPrevKeyCol(data type same as KeyCol) and StgVarCntr as Integer with default value 0

where KeyCol is your input column which defines the duplicate.

Expression for StgVarCntr(1st stg var-- maintain order):

If DSLinknn.KeyCol = StgVarPrevKeyCol Then StgVarCntr + 1 Else 1

Expression for StgVarPrevKeyCol(2nd stg var):

DSLinknn.KeyCol

3. Now in constrain, if you filter rows where StgVarCntr = 1 will give you the unique records and if you filter StgVarCntr > 1 will give you duplicate records.

My source is Like

Sr_no, Name

10,a

10,b

20,c

30,d

30,e

40,f

My target Should Like:

Target 1:(Only unique means which records r only once)

20,c

40,f

Target 2:(Records which r having more than 1 time)

10,a

10,b

30,d

30,e

How to do this in DataStage....

Scenario:1. Sort and partition the input data of the transformer on the key(s) which defines the duplicate.

2. Define two stage variables, let's say StgVarPrevKeyCol(data type same as KeyCol) and StgVarCntr as Integer with default value 0

where KeyCol is your input column which defines the duplicate.

Expression for StgVarCntr(1st stg var-- maintain order):

If DSLinknn.KeyCol = StgVarPrevKeyCol Then StgVarCntr + 1 Else 1

Expression for StgVarPrevKeyCol(2nd stg var):

DSLinknn.KeyCol

3. Now in constrain, if you filter rows where StgVarCntr = 1 will give you the unique records and if you filter StgVarCntr > 1 will give you duplicate records.

My source is Like

Sr_no, Name

10,a

10,b

20,c

30,d

30,e

40,f

My target Should Like:

Target 1:(Only unique means which records r only once)

20,c

40,f

Target 2:(Records which r having more than 1 time)

10,a

10,b

30,d

30,e

How to do this in DataStage....

**************

use aggregator and transformer stages

source-->aggregator-->transformat-->targ

perform count in aggregator, and take two op links in trasformer, filter data count>1 for one llink and put count=1 for second link.

source-->aggregator-->transformat-->targ

perform count in aggregator, and take two op links in trasformer, filter data count>1 for one llink and put count=1 for second link.

Scenario:

in my i/p source i have N no.of records

In output i have 3 targets

i want o/p like 1st rec goes to 1st targt and

2nd rec goes to 2nd target and

3rd rec goes to 3rd target again

4th rec goes to 1st taget ............ like this

do this ""without using partition techniques "" remember it.

In output i have 3 targets

i want o/p like 1st rec goes to 1st targt and

2nd rec goes to 2nd target and

3rd rec goes to 3rd target again

4th rec goes to 1st taget ............ like this

do this ""without using partition techniques "" remember it.

*****************

source--->trans---->target

in trans use conditions on constraints

mod(empno,3)=1

mod(empno,3)=2

mod(empno,3)=0

mod(empno,3)=1

mod(empno,3)=2

mod(empno,3)=0

im having i/p as

col A

a_b_c

x_F_I

DE_GH_IF

we hav to mak it as

col1 col 2 col3

a b c

x f i

de gh if

*********************

Transformer

create 3 columns with derivation

col1 Field(colA,'_',1)

col2 Field(colA,'_',2)

col3 Field(colA,'_',3)

create 3 columns with derivation

col1 Field(colA,'_',1)

col2 Field(colA,'_',2)

col3 Field(colA,'_',3)

**************

Field function divides the column based on the delimeter,

if the data in the col is like A,B,C

then

Field(col,',',1) gives A

Field(col,',',2) gives B

Field(col,',',3) gives C

if the data in the col is like A,B,C

then

Field(col,',',1) gives A

Field(col,',',2) gives B

Field(col,',',3) gives C

Scenario:

Scenario:

Scenario:

20 comments:

How to Find Out Duplicate Values Using Transformer?

another way to find the duplicate value can be using a sorter stage before transformer.

In sorter: make Cluster Key change = TRUE

on the Key

then in Transformer filter the oulput on basic of value of cluste key change which can be put in stage variable.

try it its easier

Hi,

I have one sequential file that contains two table records.i have to split into two tables.

any one can help me?

Thanks for the Great article with such a informative content….the author has done really a great job. He has shared Datastage Scenarios and solutions it’s really helpful for cracking datastage and it’s helpful for understanding datastage as well. Datastage is a tool set for designing, developing, and running applications that populateone or more tables in a data warehouse or data mart. but I found one better and effective E-learning website related to Datastage just have a look: DataStage Training

Hi Check out some solutions here https://www.youtube.com/channel/UCY_d1OdxtuYZGp8URYrajSA

keep posting more documents and are really helpfull. http://www.learnmyit.com

keep posting more documents and are really helpfull.

Hey , really this is nice link thanks for sharing this information.Running a best training institute in Delhi which provide online training for students and also provide certification courses which is very beneficial for those students who wants a bright future in IT industry.

https://techandmate.com

Howdy Mate,

Thanks for highlighting this and indicating about Datastage Scenarios and solutions where more study and thought is necessary.

Is is true the number of

people with Unix skills is really declining?

A lot of Microservices, serverless computing bandwagon is seen on the market, does it means Unix/Linux and servers will be non-existent in future?

Excellent tutorials - very easy to understand with all the details. I hope you will continue to provide more such tutorials.

Merci

Ajeeth

last Question

a_b_c

x_f_i

GE_GH_IJ

please use convert function

Convert("_","",mylink.mystring1)

then you will get out put

abc

xfi

GEGHIJ

Above solution provided by

Thanks and Regards

Phani.J

go langaunage online training

azure online training

java online training

salesforce online training

hadoop online training

Data Science online training

Is it recommended to use transformer stage after DB stage? If yes could someone mention the reason

Yet again all that considered I read it yesterday yet I had several contemplations about it and today I expected to look at it considering how it is lavishly shaped.

Internet of things Certification Courses

Are you ready to enter the cloud with Amazon Web Services? If so, you'll need to become AWS Certified. The aws certification training route has been designed so that anyone can reach this goal in a given amount of time – no matter how much education or experience you have.

fake site

Froud site

Hi Team

Can anyone post the exact solution for scenario 12 in the blog (sum of all salaries of all exployees) the solution given inn the blog is not quite clear.

Post a Comment